package org.example.test.qps;

import lombok.extern.slf4j.Slf4j;

import org.example.infrastructure.redis.RedissonService;

import org.junit.Before;

import org.junit.Test;

import org.junit.runner.RunWith;

import org.redisson.api.RLock;

import org.redisson.api.RScript;

import org.redisson.api.RedissonClient;

import org.redisson.client.codec.StringCodec;

import org.springframework.boot.test.context.SpringBootTest;

import org.springframework.test.context.junit4.SpringRunner;

import javax.annotation.Resource;

import java.util.Collections;

import java.util.concurrent.*;

import java.util.concurrent.atomic.AtomicInteger;

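/**
 * QPS comparison test for three occupyTeamStock approaches.
 *
 * Approach 1: lock-free incr. 2 Redis RTTs on the success path, 3 when the request is rejected as oversold; includes the recovery-count fallback (same as production code).
 * Approach 2: Lua script. 1 Redis RTT; the script runs atomically inside Redis and performs a basic stock deduction.
 * Approach 3: distributed lock. At least 4 Redis RTTs (lock, GET, INCR, unlock), more while waiting for the lock; fully serialized; the lock-release bug is fixed.
 */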

@Slf4j

@RunWith(SpringRunner.class)

@SpringBootTest

public class OccupyTeamStockQpsTest {

@Resource

private RedissonService redissonService;

// The Lua script needs direct access to RScript, which RedissonService does not wrap, so the client is injected directly

@Resource

private RedissonClient redissonClient;

// -------- Shared parameters --------
private static final String STOCK_KEY = "qps_test_team_stock";
private static final String RECOVERY_KEY = "qps_test_team_stock_recovery"; // used by approach 1 only
private static final String LUA_STOCK_KEY = "qps_test_team_stock_lua"; // used by approach 2 only (deduction mode)
private static final int TARGET = 100_000; // large enough that oversell rejections do not distort the performance measurement
private static final int VALID_TIME = 3600; // seconds
private static final int THREAD_COUNT = 200; // number of concurrent threads
private static final int TOTAL_REQUESTS = 10_000; // total number of requests

@Before

public void resetKeys() {

// Keys are cleared before every @Test so the runs do not interfere with each other

redissonService.remove(STOCK_KEY);

redissonService.remove(RECOVERY_KEY);

redissonService.remove("occupy_lock_" + STOCK_KEY);

// Approach 2 (Lua) uses deduction logic, so the total stock has to be preloaded
// StringCodec ensures that tonumber in the Lua script can parse the stored value

redissonClient.getBucket(LUA_STOCK_KEY, StringCodec.INSTANCE).set(String.valueOf(TARGET));

}

// ============================================================

// Approach 1: lock-free incr (identical to production code, includes the recovery count)

// ============================================================

@Test

public void test_qps_noLock() throws InterruptedException {

runQpsTest("[Approach 1: lock-free incr (with recovery count)]", this::occupyTeamStockNoLock);

}

private boolean occupyTeamStockNoLock() {

// step1: read the recovery count (a non-atomic read; under high concurrency it may return a stale value)

Long recoveryCount = redissonService.getAtomicLong(RECOVERY_KEY);

recoveryCount = (recoveryCount == null) ? 0L : recoveryCount;

// step2: atomically increment to claim a slot; +1 because one member already occupies a slot when the team is created

long occupy = redissonService.incr(STOCK_KEY) + 1;

if (occupy > TARGET + recoveryCount) {

// Oversold: this incr slot is wasted; increment the recovery count so later requests can reuse the slot range

redissonService.incr(RECOVERY_KEY);

return false;

}

return true;

}

// ============================================================

// Approach 2: Lua script (basic stock deduction)

// ============================================================

private static final String LUA_DEDUCT_STOCK =
        "local stockKey = KEYS[1]\n" +
        "local deductCount = tonumber(ARGV[1])\n" +
        "local currentStock = redis.call('GET', stockKey)\n" +
        "if not currentStock then\n" +
        "  return -1\n" + // key does not exist in the cache
        "end\n" +
        "if tonumber(currentStock) < deductCount then\n" +
        "  return 0\n" + // insufficient stock
        "end\n" +
        "redis.call('DECRBY', stockKey, deductCount)\n" +
        "return 1\n"; // deduction succeeded

@Test

public void test_qps_lua() throws InterruptedException {

runQpsTest("[Approach 2: Lua script (basic stock deduction)]", this::occupyTeamStockLua);

}

private boolean occupyTeamStockLua() {

// StringCodec makes sure keys and arguments are sent to Redis as plain strings

Long result = redissonClient.getScript(StringCodec.INSTANCE)

.eval(

RScript.Mode.READ_WRITE,

LUA_DEDUCT_STOCK,

RScript.ReturnType.INTEGER,

Collections.singletonList(LUA_STOCK_KEY), // KEYS[1]
"1" // ARGV[1]: deduct 1 per request

);

return result != null && result == 1L;

}

// ============================================================

// Approach 3: distributed lock (Redisson RLock)
// The unlock logic bug has been fixed

// ============================================================

@Test

public void test_qps_distributedLock() throws InterruptedException {

runQpsTest("[Approach 3: distributed lock (Redisson RLock)]", this::occupyTeamStockDistributedLock);

}

private boolean occupyTeamStockDistributedLock() {

RLock lock = redissonService.getLock("occupy_lock_" + STOCK_KEY);

try {

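// waitTime=3s (maximum time to wait for the lock), leaseTime=5s (the lock expires automatically after this hold time, which prevents a deadlock if the holder crashes)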

if (lock.tryLock(3, 5, TimeUnit.SECONDS)) {

try {

Long current = redissonService.getAtomicLong(STOCK_KEY);

current = (current == null) ? 0L : current;

if (current >= TARGET) {

return false;

}

redissonService.incr(STOCK_KEY);

return true;

} finally {

// [Bug fix] unlock only after the lock was actually acquired

lock.unlock();

}

} else {

// Timed out waiting for the lock; return failure directly

return false;

}

} catch (InterruptedException e) {

Thread.currentThread().interrupt();

return false;

}

// [Bug fix] removed the incorrect outer finally { lock.unlock(); }

}

// ============================================================

// Generic QPS test harness

// ============================================================

private void runQpsTest(String label, Callable<Boolean> task) throws InterruptedException {

ExecutorService pool = Executors.newFixedThreadPool(THREAD_COUNT);

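// The barrier releases tasks in batches of THREAD_COUNT, so TOTAL_REQUESTS must be a multiple of THREAD_COUNT; otherwise the final batch never passes the barrier
CyclicBarrier barrier = new CyclicBarrier(THREAD_COUNT);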

CountDownLatch latch = new CountDownLatch(TOTAL_REQUESTS);

AtomicInteger successCount = new AtomicInteger(0);

AtomicInteger failCount = new AtomicInteger(0);

AtomicInteger errorCount = new AtomicInteger(0);

long startTime = System.currentTimeMillis();

for (int i = 0; i < TOTAL_REQUESTS; i++) {

pool.submit(() -> {

try {

barrier.await();

boolean result = task.call();

if (result) successCount.incrementAndGet();

else failCount.incrementAndGet();

} catch (Exception e) {

errorCount.incrementAndGet();

log.error("{} execution failed", label, e);

} finally {

latch.countDown();

}

});

}

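// Do not silently report numbers for an incomplete run
if (!latch.await(60, TimeUnit.SECONDS)) {
    log.warn("{} did not finish within 60 s; the figures below cover an incomplete run", label);
}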

long elapsed = System.currentTimeMillis() - startTime;

pool.shutdown();

double qps = TOTAL_REQUESTS * 1000.0 / elapsed;

double avgRt = (double) elapsed / TOTAL_REQUESTS;

log.info("=========================================");

log.info("Approach: {}", label);
log.info("Concurrent threads: {}", THREAD_COUNT);
log.info("Total requests: {}", TOTAL_REQUESTS);
log.info("Succeeded: {}", successCount.get());
log.info("Failed (over limit): {}", failCount.get());
log.info("Errors: {}", errorCount.get());
log.info("Total elapsed: {} ms", elapsed);
log.info("QPS: {}", String.format("%.2f", qps));
log.info("Average RT: {} ms/req", String.format("%.3f", avgRt));

log.info("=========================================");

}

}

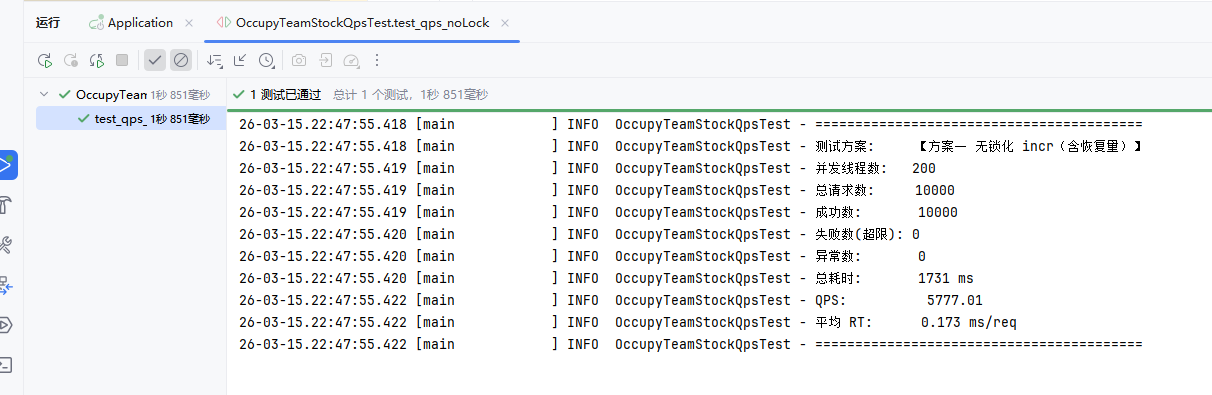

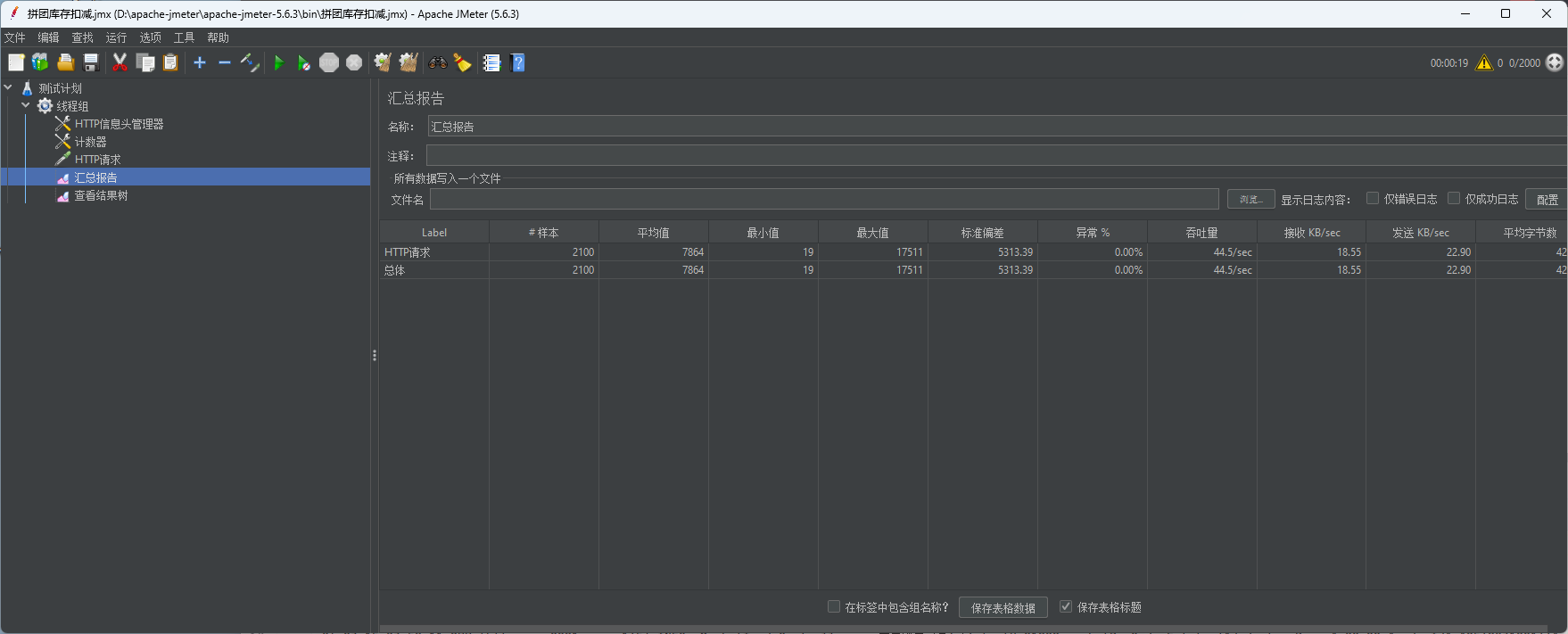

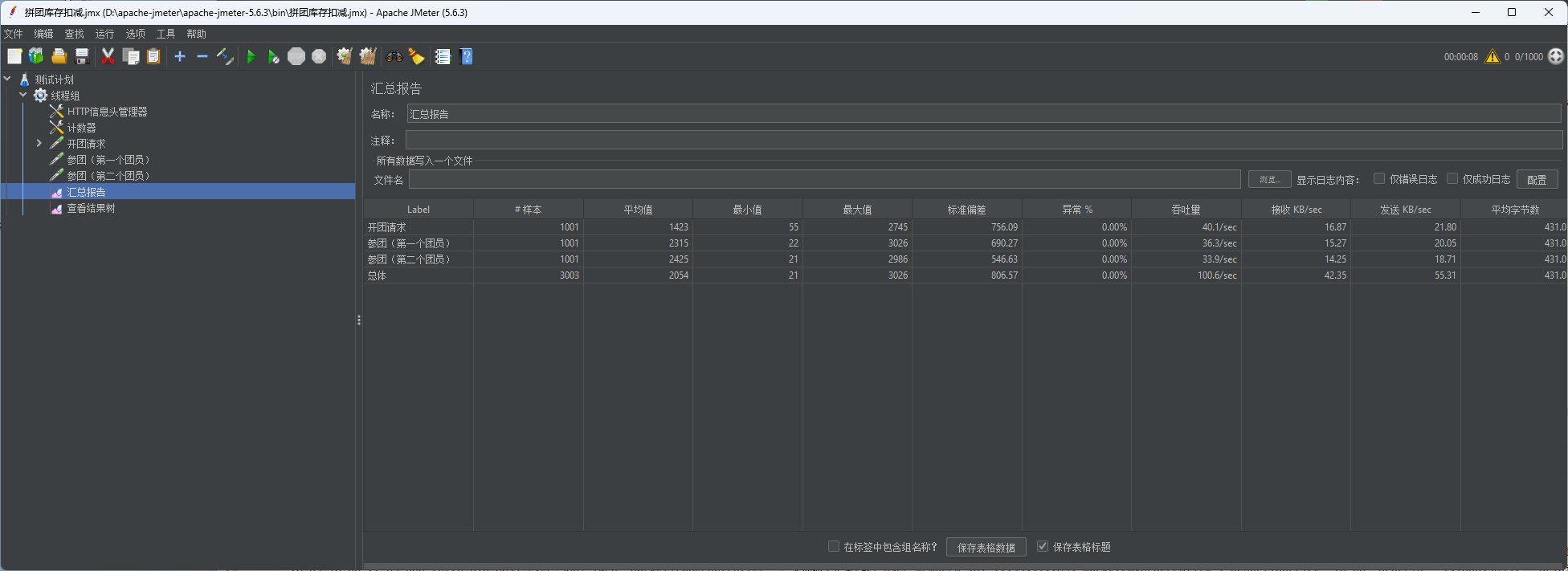

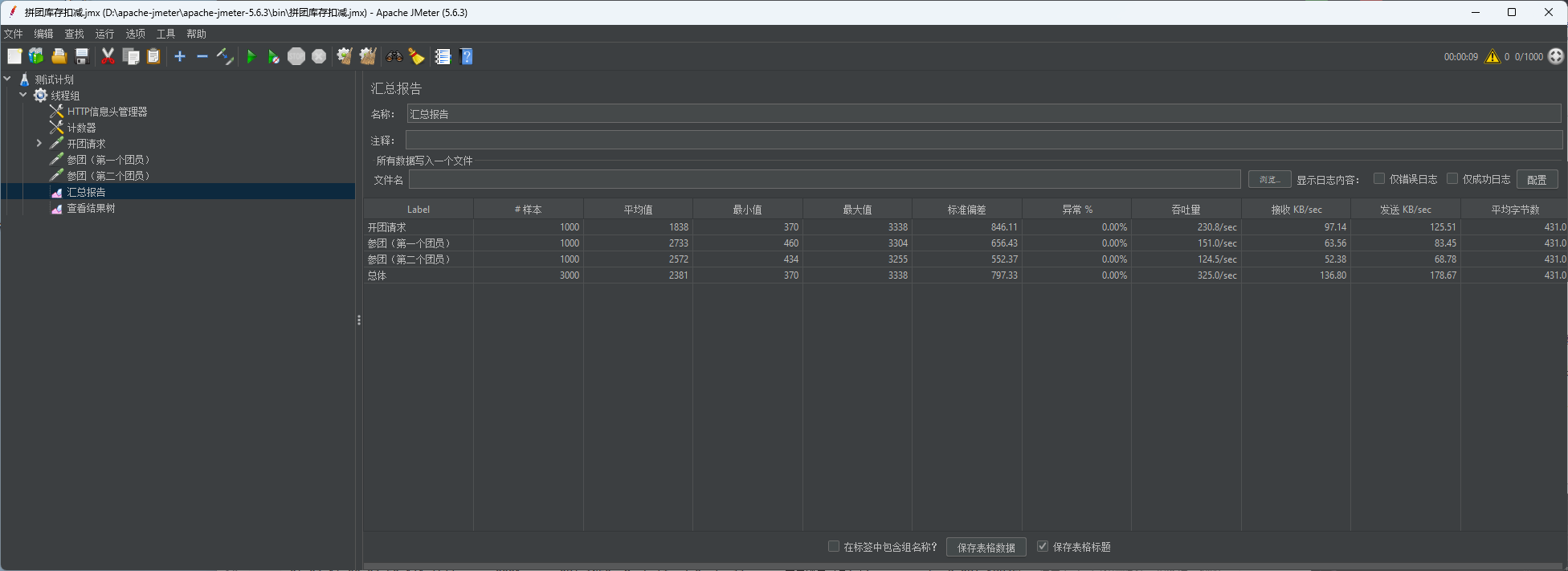

First, the lock-free stock deduction

Each request costs 0.26 ms on average, and QPS reaches about 6,000

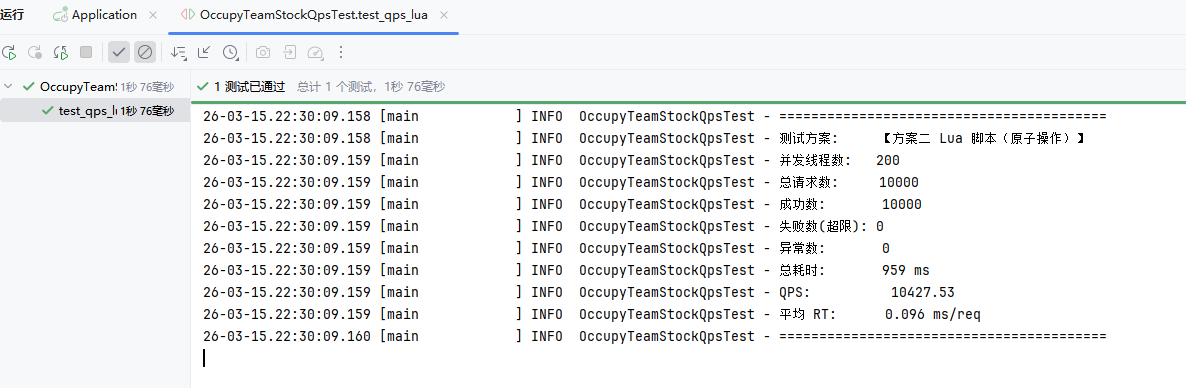

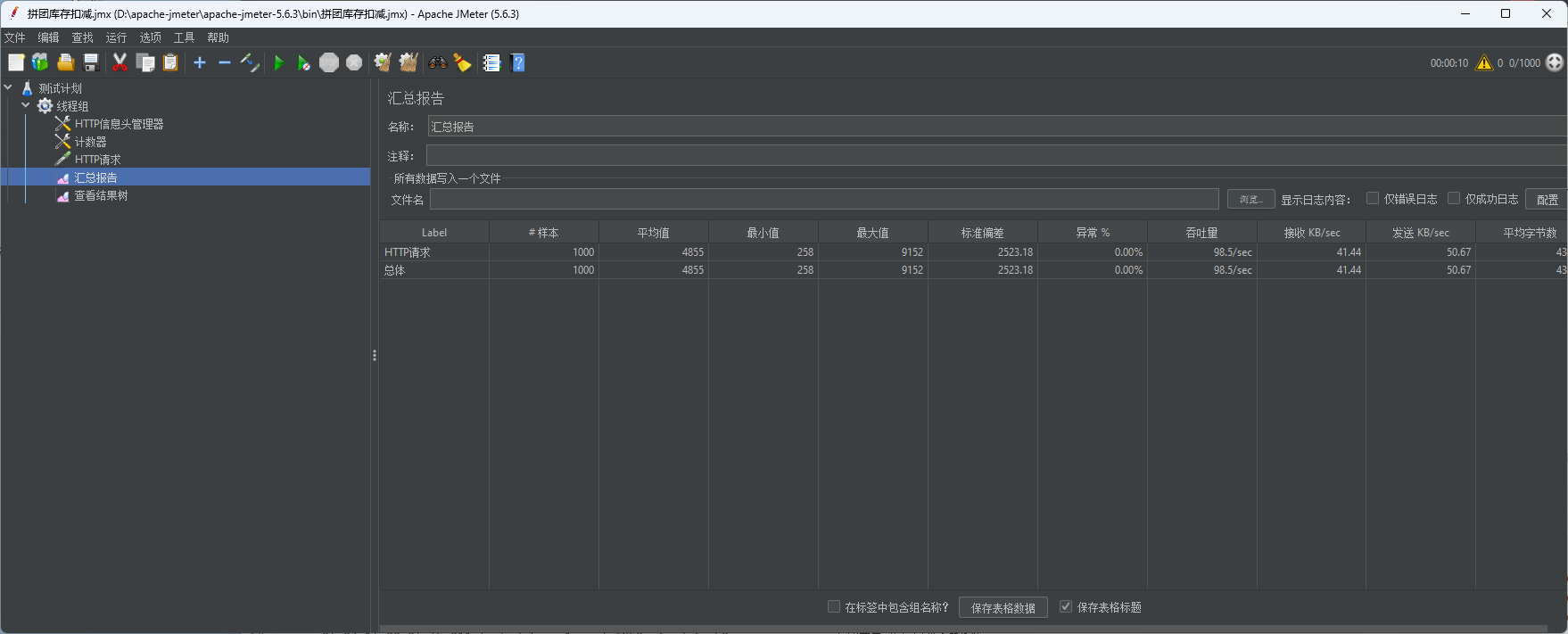
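For reference, the lock-free logic measured here can be modeled in memory. The sketch below is a stand-in under stated assumptions, not the production code: two `AtomicLong` fields replace the `STOCK_KEY` and `RECOVERY_KEY` Redis counters, `incr` is assumed to return the post-increment value, and the class and method names are hypothetical.

```java
import java.util.concurrent.atomic.AtomicInteger;
import java.util.concurrent.atomic.AtomicLong;
import java.util.stream.IntStream;

/** In-memory model of the lock-free occupy logic (hypothetical names, no Redis involved). */
final class LockFreeOccupySimulation {
    private final AtomicLong stock = new AtomicLong();    // stand-in for STOCK_KEY
    private final AtomicLong recovery = new AtomicLong(); // stand-in for RECOVERY_KEY
    private final long target;

    LockFreeOccupySimulation(long target) {
        this.target = target;
    }

    boolean occupy() {
        long recoveryCount = recovery.get();       // step 1: plain read, may be stale
        long occupy = stock.incrementAndGet() + 1; // step 2: atomic increment, +1 for the team creator
        if (occupy > target + recoveryCount) {
            recovery.incrementAndGet();            // slot wasted: widen the allowed range by one
            return false;
        }
        return true;
    }

    /** Fires `requests` occupy calls, sequentially or in parallel, and counts the successes. */
    static int countSuccesses(long target, int requests, boolean parallel) {
        LockFreeOccupySimulation sim = new LockFreeOccupySimulation(target);
        AtomicInteger successes = new AtomicInteger();
        IntStream range = IntStream.range(0, requests);
        (parallel ? range.parallel() : range).forEach(i -> {
            if (sim.occupy()) {
                successes.incrementAndGet();
            }
        });
        return successes.get();
    }

    public static void main(String[] args) {
        System.out.println("sequential successes: " + countSuccesses(10, 100, false));
        System.out.println("parallel successes:   " + countSuccesses(10, 1000, true));
    }
}
```

In this model a target of 10 admits 9 requests, because the creator already holds one slot. A stale recovery read can only make the check stricter, so the parallel run does not oversell.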

Lua script

With the Lua script, QPS exceeds 10,000

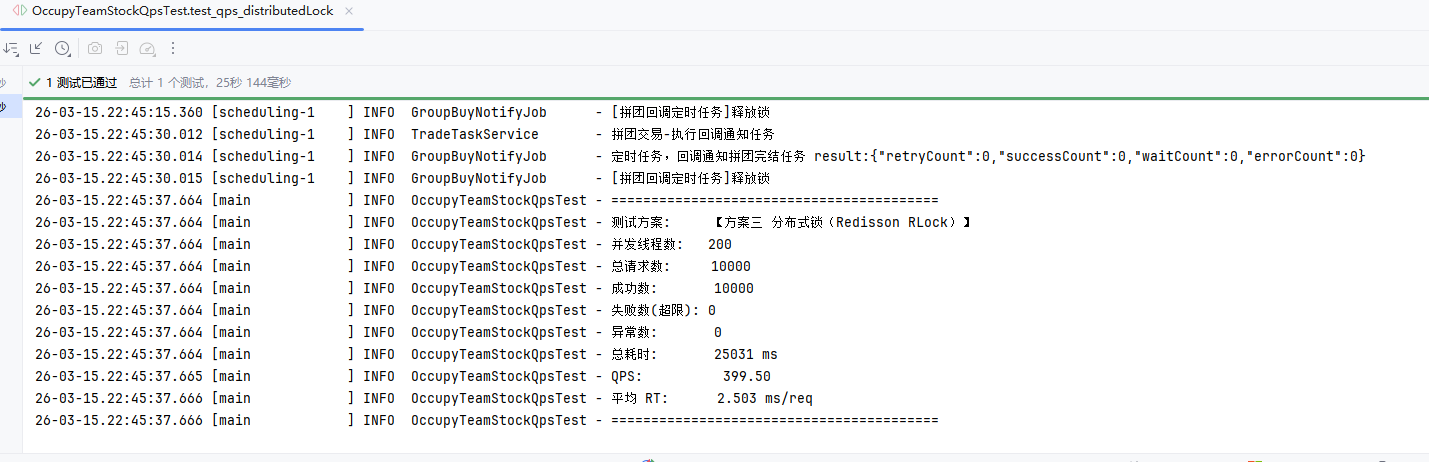

Distributed lock

With the distributed lock, QPS is only 399

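Analysis

The main factor is most likely network latency, that is, the number of round trips per request; the cost of parsing the Lua script is secondary.

- Lua script: 1 RTT plus atomic execution on the Redis side gives the highest throughput.

- Lock-free incr: 2 to 3 RTTs, so throughput is roughly halved.

- Distributed lock: acquiring and releasing the lock each run a fairly complex Lua script (about 2 RTTs in total), on top of the business GET and INCR (2 RTTs) and heavy thread queuing on the lock, so throughput falls off a cliff.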
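The RTT counts in the list above can be turned into a rough back-of-the-envelope model. This is only a sketch under stated assumptions: the 0.5 ms round-trip time is a hypothetical value, not a measurement from these runs, and the model ignores Redis CPU time, client overhead, connection sharing, and lock retries. The absolute numbers for the parallel case are upper bounds that a real run will not reach; the point is the ratio between approaches and the cap that serialization imposes.

```java
/** Rough throughput model driven only by Redis round trips per request (illustrative). */
final class RttThroughputModel {

    /** Requests run in parallel on `threads` clients; each request costs rtts * rttMillis. */
    static double parallelQps(int threads, int rttsPerRequest, double rttMillis) {
        return threads * 1000.0 / (rttsPerRequest * rttMillis);
    }

    /** Requests are serialized by a lock, so only one request is in flight at a time. */
    static double serializedQps(int rttsPerRequest, double rttMillis) {
        return 1000.0 / (rttsPerRequest * rttMillis);
    }

    public static void main(String[] args) {
        double rttMillis = 0.5; // hypothetical network round-trip time
        int threads = 200;      // THREAD_COUNT used by the test

        double lua = parallelQps(threads, 1, rttMillis);
        double lockFree = parallelQps(threads, 2, rttMillis);
        double locked = serializedQps(4, rttMillis);

        System.out.printf("Lua / lock-free ratio: %.1f%n", lua / lockFree);
        System.out.printf("Serialized lock cap:   %.0f req/s%n", locked);
    }
}
```

Under these assumptions the Lua script comes out at twice the lock-free throughput regardless of thread count, while the lock-based approach is capped by a single in-flight request no matter how many threads are waiting.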

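Redis always executes core commands on a single thread. Whether you use the lock-free approach or the Lua script, every command is queued and executed serially inside the Redis engine. Multiple requests never deduct stock in parallel inside Redis.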
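The serial execution described above can be imitated in memory with a single-threaded executor. The following is a minimal sketch, not Redis itself: `SingleThreadedStore` and its methods are hypothetical names, the executor plays the role of the Redis command loop, and one submitted task plays the role of one Lua script invocation.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.atomic.AtomicInteger;

/** In-memory stand-in for Redis: every "command" runs on one thread, in arrival order. */
final class SingleThreadedStore {
    private final ExecutorService commandLoop = Executors.newSingleThreadExecutor();
    private long stock; // only touched inside commandLoop tasks

    SingleThreadedStore(long initialStock) {
        this.stock = initialStock;
    }

    /** Check-and-decrement submitted as ONE command, like the Lua deduction script. */
    Future<Boolean> deductAtomically(long count) {
        return commandLoop.submit(() -> {
            if (stock < count) {
                return false;
            }
            stock -= count;
            return true;
        });
    }

    /** Many client threads hammer the store; returns how many deductions succeeded. */
    static int runConcurrentDeductions(long initialStock, int clients, int requestsPerClient) {
        SingleThreadedStore store = new SingleThreadedStore(initialStock);
        ExecutorService clientPool = Executors.newFixedThreadPool(clients);
        AtomicInteger successes = new AtomicInteger();
        try {
            List<Future<?>> clientTasks = new ArrayList<>();
            for (int c = 0; c < clients; c++) {
                clientTasks.add(clientPool.submit(() -> {
                    for (int i = 0; i < requestsPerClient; i++) {
                        if (store.deductAtomically(1).get()) {
                            successes.incrementAndGet();
                        }
                    }
                    return null;
                }));
            }
            for (Future<?> task : clientTasks) {
                task.get(); // wait for every client to finish
            }
            return successes.get();
        } catch (InterruptedException | ExecutionException e) {
            throw new IllegalStateException(e);
        } finally {
            clientPool.shutdownNow();
            store.commandLoop.shutdownNow();
        }
    }

    public static void main(String[] args) {
        System.out.println("successes: " + runConcurrentDeductions(100, 8, 50));
    }
}
```

Because the check and the decrement travel as a single task, no other client's command can run between them, which is why the number of successes cannot exceed the initial stock even though the clients themselves run concurrently.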

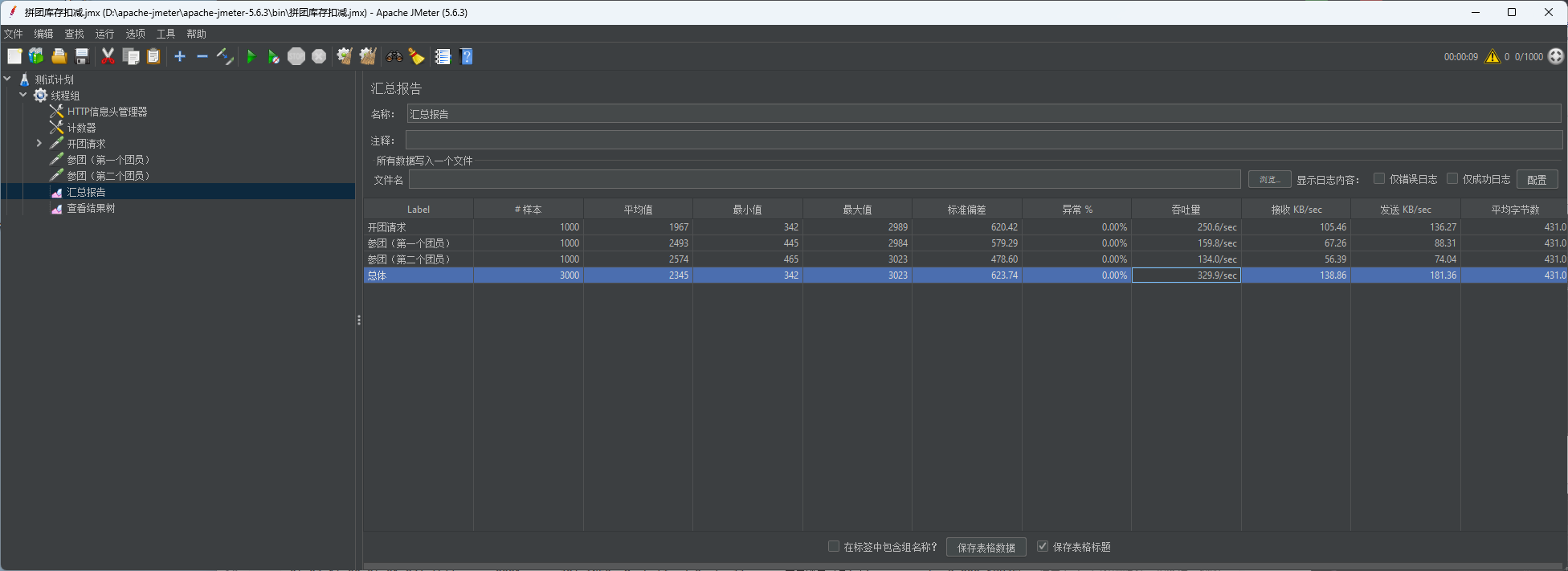

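Testing the API endpoint directly gives a much lower figure: only 44.5 QPS

The reason is that every request deducts the quota of the same team in the database, so the requests end up waiting on MySQL locks

A realistic test should deduct quota across many teams rather than a single one

Deducting 3 times per team is enough (pdd group buys also use 3), and the more teams there are, the higher the QPS should be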
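A multi-team workload of that shape could be generated as follows. This is a hedged sketch: the `team_N` id format, the class name, and the in-memory counters are all assumptions for illustration, and the per-team counter merely stands in for whatever store holds the real quota.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Random;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;

/** Builds and replays a workload spread over many teams (hypothetical helper). */
final class MultiTeamWorkload {

    /** Returns a shuffled list of team ids in which every team appears requestsPerTeam times. */
    static List<String> buildRequests(int teamCount, int requestsPerTeam, long seed) {
        List<String> requests = new ArrayList<>(teamCount * requestsPerTeam);
        for (int t = 0; t < teamCount; t++) {
            for (int r = 0; r < requestsPerTeam; r++) {
                requests.add("team_" + t); // hypothetical team id format
            }
        }
        Collections.shuffle(requests, new Random(seed));
        return requests;
    }

    /** Replays the requests in parallel; each team accepts at most slotsPerTeam of them. */
    static Map<String, Integer> replay(List<String> requests, int slotsPerTeam) {
        Map<String, AtomicInteger> occupied = new ConcurrentHashMap<>();
        Map<String, AtomicInteger> accepted = new ConcurrentHashMap<>();
        requests.parallelStream().forEach(teamId -> {
            int slot = occupied.computeIfAbsent(teamId, k -> new AtomicInteger()).incrementAndGet();
            if (slot <= slotsPerTeam) {
                accepted.computeIfAbsent(teamId, k -> new AtomicInteger()).incrementAndGet();
            }
        });
        Map<String, Integer> result = new HashMap<>();
        accepted.forEach((teamId, count) -> result.put(teamId, count.get()));
        return result;
    }

    public static void main(String[] args) {
        List<String> requests = buildRequests(1_000, 3, 42L);
        Map<String, Integer> accepted = replay(requests, 3);
        System.out.println("teams served: " + accepted.size());
    }
}
```

Shuffling matters here: without it the requests for one team arrive back to back and contend on the same counter, which recreates the single-team hot spot the multi-team test is meant to avoid.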

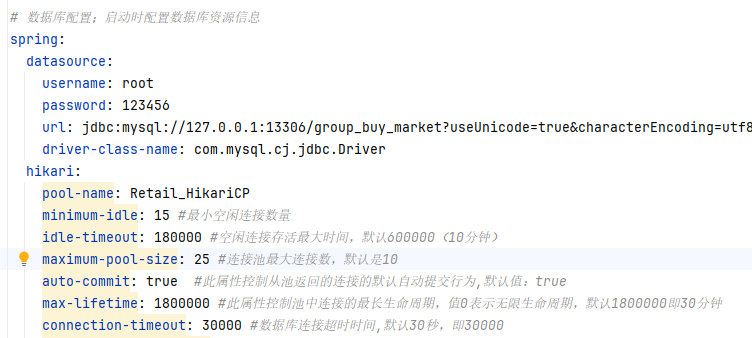

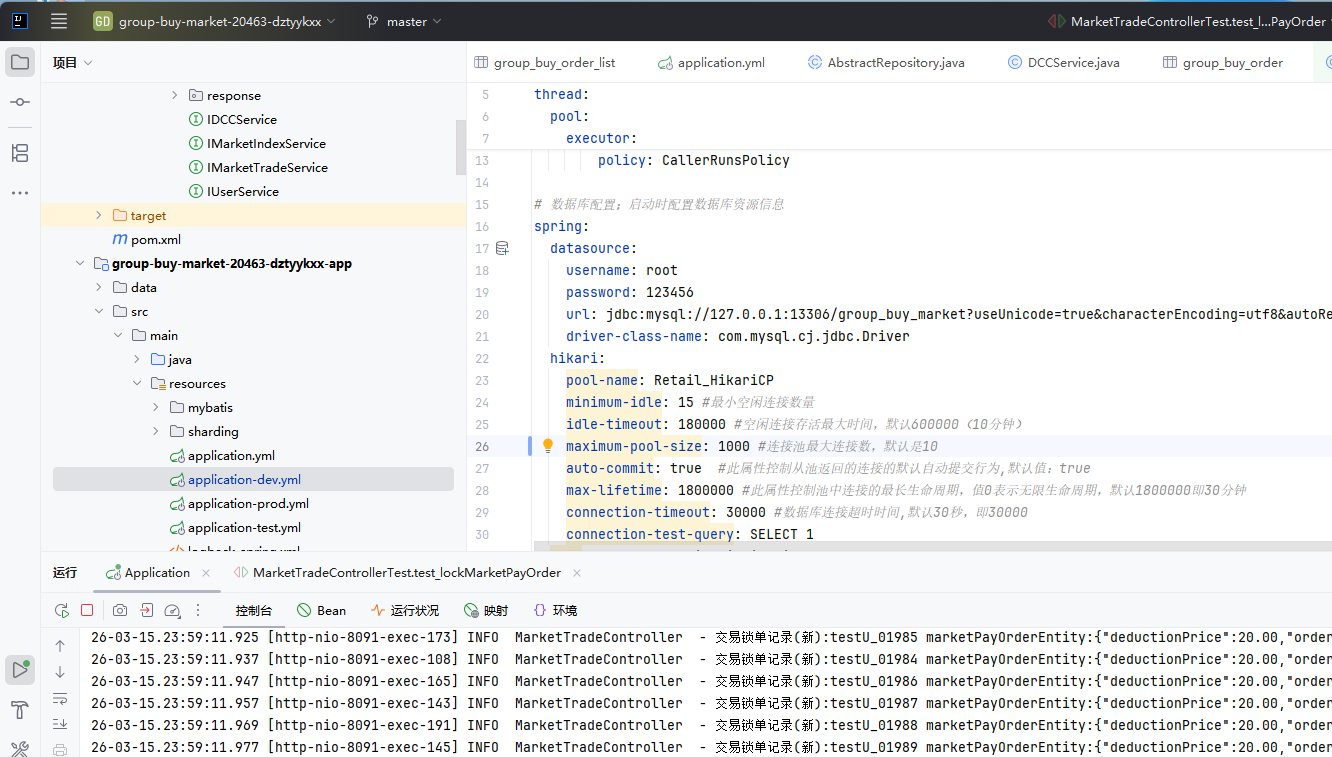

Max connections 25: QPS 100

Max connections 500: QPS 325

Max connections 1500: QPS 329

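So far the bottleneck appears to be the database write. To push concurrency further, the request should publish a message to MQ and return as soon as the deduction succeeds, with the database write done asynchronously afterwards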
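The shape of that change can be sketched in memory. Everything here is an assumption for illustration: a `LinkedBlockingQueue` stands in for the MQ, a list stands in for MySQL, an `AtomicLong` stands in for the Redis stock counter, and the class and method names are hypothetical. A real MQ setup would also need delivery guarantees and an idempotent consumer, which this sketch does not cover.

```java
import java.util.List;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.atomic.AtomicLong;

/** Deduct first, enqueue, return; a background consumer persists later (illustrative). */
final class AsyncWriteBehindSketch {
    private static final String STOP = "__stop__"; // marker that tells the consumer to exit

    private final AtomicLong remainingStock;                                  // stand-in for Redis
    private final BlockingQueue<String> queue = new LinkedBlockingQueue<>();  // stand-in for MQ
    private final List<String> database = new CopyOnWriteArrayList<>();       // stand-in for MySQL

    AsyncWriteBehindSketch(long stock) {
        this.remainingStock = new AtomicLong(stock);
    }

    /** Fast path: no database access, only an in-memory deduction and an enqueue. */
    boolean placeOrder(String orderId) {
        if (remainingStock.decrementAndGet() < 0) {
            remainingStock.incrementAndGet(); // give the slot back
            return false;
        }
        queue.add(orderId);
        return true;
    }

    /** Slow path: drains the queue on its own thread and "persists" each order. */
    Thread startConsumer() {
        Thread consumer = new Thread(() -> {
            try {
                while (true) {
                    String orderId = queue.take();
                    if (STOP.equals(orderId)) {
                        return;
                    }
                    database.add(orderId);
                }
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        consumer.start();
        return consumer;
    }

    /** Sends `requests` orders against `stock` slots and returns how many rows were persisted. */
    static int runDemo(long stock, int requests) {
        AsyncWriteBehindSketch sketch = new AsyncWriteBehindSketch(stock);
        Thread consumer = sketch.startConsumer();
        for (int i = 0; i < requests; i++) {
            sketch.placeOrder("order_" + i);
        }
        sketch.queue.add(STOP);
        try {
            consumer.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return sketch.database.size();
    }

    public static void main(String[] args) {
        System.out.println("persisted rows: " + runDemo(5, 20));
    }
}
```

The request path never waits on the database, so its latency is bounded by the deduction and the enqueue; the trade-off is that a persisted row lags behind the response and the consumer has to cope with retries and failures.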